Like any company, Automattic is constantly on a journey to get better: sometimes we have the good fortune of finding improvement in leaps and bounds, but most of the time, we move slowly, we make small changes, finding iterative wins and moving down the to‑do list.

I suspect this is how most progress happens, the same way Hemingway described going broke: gradually, then suddenly.

One of the big-picture initiatives we’re undertaking at the moment is the pursuit of greater insights from our mountains of data. We’re not alone in this pursuit; it’s a common objective, especially among software companies, and as such there are heaps of third-party applications and consultants available to help you along your way. We chose a tool called Looker, for reasons I go into here.

We’ve had the good fortune of bringing back a former Automattician, Matt Mazur, in a consulting role to help us get our Looker instance going, along with, of course, Looker’s professional services organization.

Even with these resources, there’s always more to learn. Especially when it comes to reporting, an organization’s output will depend on a robust and trustworthy pipeline: if your reports proudly display a lovely scatterplot with totally wrong underlying data points due to an error upstream, that’s a problem. That’s a real problem!

So I set forth from my home in New York’s Hudson Valley to join my first-ever Looker NYC Meetup, which was luckily on the topic of data engineering and infrastructure, my exact curiosity. A quick drive to Poughkeepsie and a light rail ride into Manhattan, and I was in the heart of it.

The evening’s panel was made up of some serious expertise: Jonathan Lenaghan, Director of Engineering at Datadog; Sean McIntyre, Lead Data Engineer at Warby Parker; Lenny Fishler, the Data Strategy Manager at Braze; and Dylan Baker, a hired-gun freelance analyst with DBAnalytics.

It was your classic panel discussion: questions and answers, some from Namely’s moderator, and some excellent discussion of divergent approaches to similar problems. It was satisfying and interesting to see the different-but-still-successful tactics the panelists took to solving their largely parallel data pipeline issues.

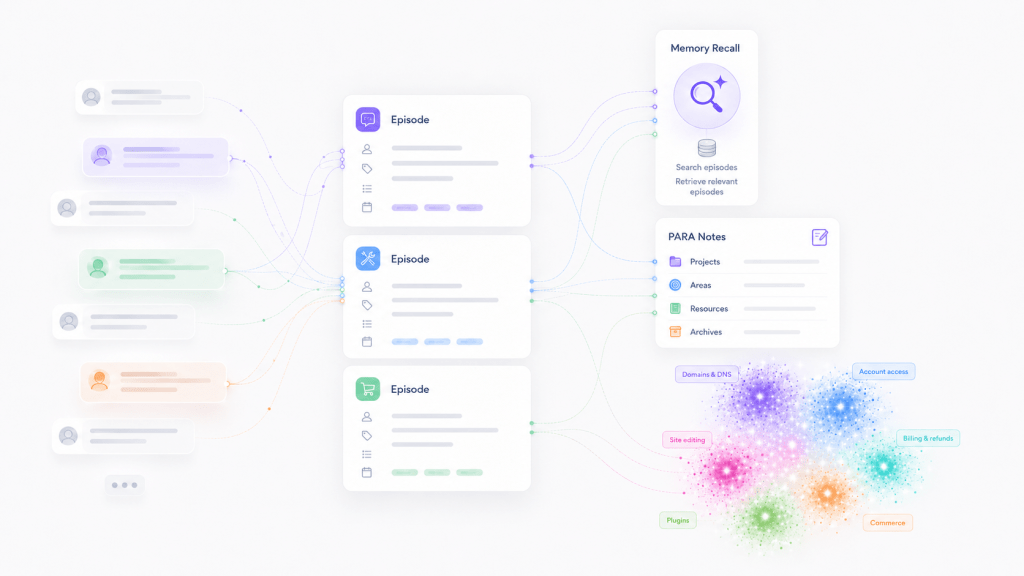

One topic that arose more than once was the evolving nature of data engineering: Dylan envisioned a future where entire data pipelines could be built without writing a line of Python, leveraging instead third-party services like Fivetran and business intelligence tools like Looker. All of the folks present agreed that outsourcing data engineering tasks to outside platforms was a big part of their pipeline.

This is interesting, as it can be seen as an industry-wide shift in response to the dearth of experienced data engineers: since many companies are unable to hire the in-house resources they might prefer, they have to find other ways to fulfill their pipeline needs, and that’s where these vendors step in.

Also worth mentioning is the idea of Looker‑as‑prototype: Looker puts a tremendous amount of data transformation power in the hands of the data analyst or LookML developer, but leveraging that power can leave the resulting data difficult for other parts of the organization to access. Using Looker to try out different transformations before pushing them upstream to earlier ETL processes was a common and unexpected use case.

The whole experience was a good reminder that problems that seem so immediate and novel are rarely as unique as they may appear: finding and leveraging a community of practice can totally change your perspective. Even one evening in a room of bright, thoughtful folks working hard on the same problems that we are was both encouraging and validating.

I came home on the late train excited to get back to work. Whatever you’re working on, if you find that you’re feeling stuck, let me offer you this real-life cheat code: find a way to get yourself surrounded by like-minded folks in your community of practice. Have a coffee, have a beer, and have yourself a chat.
